- 1 Post

- 29 Comments

1·7 months ago

1·7 months agoIt can answer questions as well as any person.

The 7th grader and plagiarism comment make me think you haven’t played with them much or really tested them.

Of course I have, my employer has me shoehorning ChatGPT into everything, and I agree with what the research says: Children can answer questions better than LLMs can.

https://techxplore.com/news/2023-12-artificial-intelligence-excel-imitation.html

Stochastic plagirism is still plagirism.

1·7 months ago

1·7 months agoBut they don’t “answer questions”, they just respond to prompts. You can’t use them to learn anything without checking their responses against authoritative sources you should have used in the first place.

There’s no intelligence there, just a plagirism laundromat and some rules for formatting text like a 7th grader.

1·7 months ago

1·7 months agoAuto complete is not a lossy encoding of a database either, it’s a product of a dataset, just like you are a product of your experiences, but it is not wholly representative of that dataset.

If LLMs don’t encode their training data, then why are they proving susceptible to data exfiltration techniques where they output the content of their training dataset verbatim? https://m.youtube.com/watch?v=L_1plTXF-FE

1·7 months ago

1·7 months agoIf LLMs were just lossy encodings of their database they wouldn’t be able to answer any questions outside of there training set.

Of course they could, in the same way that hitting the autocomplete key can finish a half-completed sentence you’ve never written before.

The fact that models can produce useful outputs from novel inputs is the whole reason why we build models. Your argument is functionally equivalent to the claim that wind tunnels are intelligent because they can characterise the aerodynamics of both old and new kinds of planes.

How do you explain the hallucinations if the llm is just a complex lookup engine? You can’t lookup something you’ve never seen.

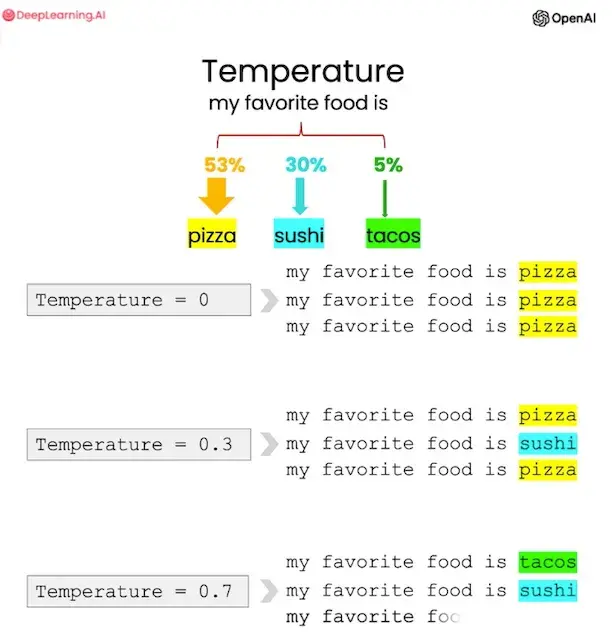

For the same reason that a random number generator is capable of producing never-before-seen strings of digits. LLM inference engines have a property called “temperature” that governs how much randomness is injected into their responses:

1·7 months ago

1·7 months agoBut it is, necessarily.

For example, when we make shit up, we’re aware that the shit we made up isn’t real. LLMs are structurally incapable of recognizing the distinction between facts they regurgitate and the ones they manufacture from whole cloth.

You didn’t have to consume terabytes of text to build a model for how to form sentences like a human, you did that with a few megabytes of overheard conversation before you were even conscious enough to be aware of it.

There’s no model of intelligence so over-simplified to the point of giving LLMs partial credit that wouldn’t also give equivalent credence to the “intelligence” of search engines.

1·7 months ago

1·7 months agoThis is not how LLMs work, they are not a database nor do they have access to one.

Please do explain how you think they make LLMs without a database of training examples to build a statistical model from.

The llm itself is just what it learned from reading all the training data,

I.e. “a model that encodes a database”.

They are a trained neural net with a set of weights on matrices that we don’t fully understand.

I.e., “we applied a very lossy compression algorithm to this database”.

We do know that it can’t possibly have all the information from its training set since the training sets (measured in tb or pb) are orders of magnitude bigger than the models (measured in gb).

Check out the demoscene sometime, you’ll be surprised how much complexity can be generated from a very small set of instructions. I’ve seen entire first person shooter video games less than 100kb in size that algorithmically generate hundreds of megabytes of texture data at runtime. The idea that a mere 1,000x non-lossless compression of text would be impossible is laughable, especially when lossless text compression using neural network techniques achieved a 250x compression ratio years ago.

2·7 months ago

2·7 months agoIt could be described that way, but it wouldn’t be a very apt metaphor. We aren’t simple, stateful input-to-output algorithms, but a confluence of innate tendencies, learned experiences, acquired habits, unconscious motivations, and capable of modifying our own thought processes and behavior on the fly to suit whatever best fits the local context. Our brains encode a model of the world we live in that includes models of ourselves and the other people we interact with, all built in realtime from our observations without conscious effort.

3·7 months ago

3·7 months agoYes doing an SAT test with the answer key isn’t intelligent because that’s in your “database” and is just a matter of copying over the answers. LLMs don’t do this though, it doesn’t do a lookup of past SAT questions it’s seen and answer it, it uses some process of “reasoning” to do it.

You’ve now reduced the “process of reasoning” to hitting the autocomplete button until your keyboard spits out an answer from a database of prior conversations. It might be cleverly designed, but generative models are no more intelligent than an answer key or a library’s card catalog. Any “intelligence” they appear to encode actually comes from the people who did the work to assemble the training database.

8·7 months ago

8·7 months agoHeck, I really like these. Architecturally, they’re a clear step forward from previous peer-to-peer networks like Retroshare.

6·7 months ago

6·7 months agoLLMs are statistical models of human writing, they only offer the appearance of intelligence in the same fashion as the Chinese Room thought experiment.

There’s nothing “intelligent” in there, just a very large set of instructions for transforming inputs into outputs.

A sufficiently advanced model of the human brain can be “intelligent” in the same way that humans are, but this would not be “artificial” since it would necessarily employ the same “natural” processes as our brains.

Until we have a model of “intelligence” itself, anyone claiming to have “AI” is just trying to sell you something.

5·7 months ago

5·7 months agoThis seems like circular reasoning. SAT scores don’t measure intelligence because llm can pass it which isn’t intelligent.

The purpose of the SAT isn’t to measure intelligence, it is to rank students on their ability to answer test questions.

A copy of the answer key could get a perfect score, do you think that means it’s “intelligence” is equivalent to a person with perfect SATs?

Why isn’t the llm intelligent?

For the same reason that the SAT answer key or an instruction manual isn’t, the ability to answer questions is not the foundation of intelligence, nor is it exclusive to intelligent entities.

You still haven’t answered what intelligence is or what an a.i. would be.

Computer scientists, neurologists, and philosophers can’t answer that either, or else we’d already have the algorithms we’d need to build human-equivalent AI.

Without a definition you just fall into the trap of “A.I. is whatever computers cant do” which has been going on for a while:

Exactly, you’re just falling into the Turing Trap instead. Just because a company can convince you that it’s program is intelligent doesn’t mean it is, or else chatbots from 10 years ago would qualify.

There is one goalpost that has stayed steady, the turing test, which llm seems to have passed, at least for shorter conversation.

The Turing Test is just a slightly modified version of a Victorian-era social deduction game. It doesn’t measure intelligence, but the ability to mimic a human conversation. Turing himself acknowledged this: https://www.smithsonianmag.com/innovation/turing-test-measures-something-but-not-intelligence-180951702/

11·7 months ago

11·7 months agoBethesda lost me for good when they thought Fallout 76 was ready for release and refused to give me a refund when it very much was not.

I charged back the purchase price and haven’t given them a penny since.

62·7 months ago

62·7 months ago“Big Bacteria” is a much more accurate descriptor of humans than “Artificial Intelligence” is of large language models.

This is the same problem we had with IQ testing, what the test measures is not “intelligence”, but the ability to retain and process information according to a predefined schema. This requires no intelligence at all, as demonstrated by the fact that a sufficiently large statistical model of human writing patterns can pass the SATs.

22·7 months ago

22·7 months agoI’m not familiar with that seedbox provider, but unless you have a virtual private or dedicated server then you’re limited to the applications the host has made available.

If you do have a private node with root access, then you’ll be able to install whatever you like. Generally, these are limited to a command-line interface, in which case you’ll need something like soulseek-cli, but higher-tier hosting packages often support remote desktop which would allow you to log in and install the graphical version of the app as normal.

344·7 months ago

344·7 months agoBig Autocomplete isn’t “AI”. This is not new technology, this is “We used buzzwords to hype up 20 year old EEG interpreters, please give us money”

It already is in the more expensive cities like Denver or San Francisco.

4·7 months ago

4·7 months agoNo problem! I’ve used this trick to run non-game Windows apps on the Steam Deck too, though support can vary wildly.

As an alternative, you might also check Lutris, which employs user scripts for installing and running Windows software in Linux. You can even add them to Steam so they’ll work in the Steam Deck’s gaming mode:

https://www.reddit.com/r/linux_gaming/comments/6s0pt7/launch_wine_games_from_lutris_on_steam/

2·7 months ago

2·7 months agoAdd the setup/installer executable as a non-steam game, run it to do the install, then modify the non-steam game’s settings to point at the installed executable so it can run from the directory where it is installed.

131·7 months ago

131·7 months agoIt might be nigh-universally accepted, but that lack of controversy doesn’t change a thing. It’s still a statement about identity and the relationship between groups of people, and therefore a political statement.

See also, Left 4 Dead’s “Director” system. https://left4dead.fandom.com/wiki/The_Director Pretty sute that qualifies as prior art.